Deep Engineering #32: Richard D. Avila on The Rise of the AI Architect

Operational AI: evaluation, monitoring, and controlled rollout.

Is your source code training someone else’s AI?

On February 3 at 11:00 AM PT, we’ll introduce Cyberhaven’s unified DSPM and DLP platform, powered by data lineage. You’ll see how one AI-native solution can finally keep up with the way data really moves. Be among the first to see how a unified DSPM and DLP platform can change how your organization protects its most important data.

✍️From the editor’s desk,

Welcome to the 32nd issue of Deep Engineering!

Engineering teams today are being asked to operationalize systems whose behavior cannot be fully inferred from source code. Once a model sits inside a product workflow, the work shifts to measurement, monitoring, and change control: what the system does today, what it did yesterday, and what it will do after the next model update.

AWS’s Generative AI Lens (updated Nov. 19, 2025) puts operational requirements—traceability, monitoring, automation of lifecycle management—on the same footing as security and reliability for AI systems. The accompanying architecture note describes expanded material on responsible AI, data architecture, and agentic workflows, reflecting what teams are now deploying in practice.

If an organization cannot evaluate systems rigorously, it cannot claim to operate them reliably. OpenAI, in a Nov. 19, 2025 post, centers evaluation as an operational discipline for business deployments: setting measurable targets, tracking performance shifts, and making decisions about release readiness. Anthropic’s model reporting, updated Dec. 4, 2025, treats capabilities and safety evaluations as documentation that must be maintained alongside deployment safeguards. Dependencies can and will change on a schedule outside any single team’s control; regression testing and rollback planning must become part of normal operations.
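To make evaluation-as-a-release-decision concrete, here is a minimal Python sketch of a regression gate that compares a candidate model version against a pinned baseline on the same evaluation suite. It assumes aggregate scores where higher is better. The metric names, version strings, targets, and the 0.02 regression tolerance are hypothetical, and nothing here reflects OpenAI’s or Anthropic’s actual tooling.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EvalResult:
    """Aggregate scores for one model version on a fixed, versioned eval suite."""
    model_version: str
    scores: dict = field(default_factory=dict)  # metric name -> score, higher is better


def release_gate(baseline, candidate, targets, max_regression):
    """Return (ready, reasons) for promoting `candidate` over `baseline`.

    A metric fails if it is missing, falls below its absolute target, or drops
    by more than `max_regression` relative to the baseline (a hypothetical policy).
    """
    reasons = []
    for metric, target in targets.items():
        value = candidate.scores.get(metric)
        if value is None:
            reasons.append(f"{metric}: missing from candidate results")
            continue
        if value < target:
            reasons.append(f"{metric}: {value:.3f} is below target {target:.3f}")
        base = baseline.scores.get(metric)
        if base is not None and base - value > max_regression:
            reasons.append(f"{metric}: regressed {base - value:.3f} vs {baseline.model_version}")
    return (not reasons, reasons)


if __name__ == "__main__":
    # Hypothetical versions and scores, for illustration only.
    baseline = EvalResult("model-2025-11", {"accuracy": 0.91, "groundedness": 0.88})
    candidate = EvalResult("model-2025-12", {"accuracy": 0.93, "groundedness": 0.84})
    ready, reasons = release_gate(baseline, candidate,
                                  targets={"accuracy": 0.90, "groundedness": 0.85},
                                  max_regression=0.02)
    print(ready, reasons)
```

In this example the candidate improves accuracy but fails on groundedness, both against the absolute target and against the baseline, so `ready` is `False`. Running the same gate every time an upstream model changes is one way to turn regression testing into routine operations.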

Today’s feature sits in this environment. We have collaborated again with Richard D. Avila, who has over two decades of experience building complex software and AI systems and has led architecture for everything from large-scale simulations and autonomous platforms to data analytics systems. Avila has also co-authored Architecting AI Software Systems with Imran Ahmad. The “AI architect” Avila describes in today’s article is a role shaped by the realities of running AI in production, and a function we predict will become the cornerstone of successful software engineering teams.

🧠Expert Insight:

The Rise of the AI Architect by Richard D. Avila

In his previous article, Richard D. Avila pointed out that building an AI prototype in the lab is one thing, but turning it into a dependable product is quite another. This is where AI architecture, and the role of the AI architect, become relevant. Much like a software architect ensures a system’s structure can support its goals, an AI architect is emerging as the key figure bridging cutting-edge AI and solid engineering. Google, for example, recently appointed Koray Kavukcuoglu (formerly of DeepMind) as its first Chief AI Architect to accelerate the integration of advanced models “into our products, with the goal of more seamless integration, faster iteration, and greater efficiency”. Without sound architecture, AI innovations won’t translate into sustainable, reliable solutions. But who is an AI architect, and what do they do?

AI systems and technology are rapidly reaching into many areas of modern life, from seemingly simple tasks, such as detecting a cat in an image, to the autonomous operation of a vehicle. Society is also becoming more accepting of AI technologies. AI systems are primarily built into complex software. What makes AI-enabled systems unique is that they have decision making at their heart. This extended functionality puts new demands on the processes and guidance for building such systems.

Architecting fundamentals can help teams navigate these complexities and successfully build and operate such systems. Historically, an architect would help build a system and could then more or less “walk away” and move on to a new project. In the age of AI, this is no longer the case. The challenges of observability, integrated development, testing, and the voice of the user, together with the centrality of these systems to business outcomes, place new demands on the architect.

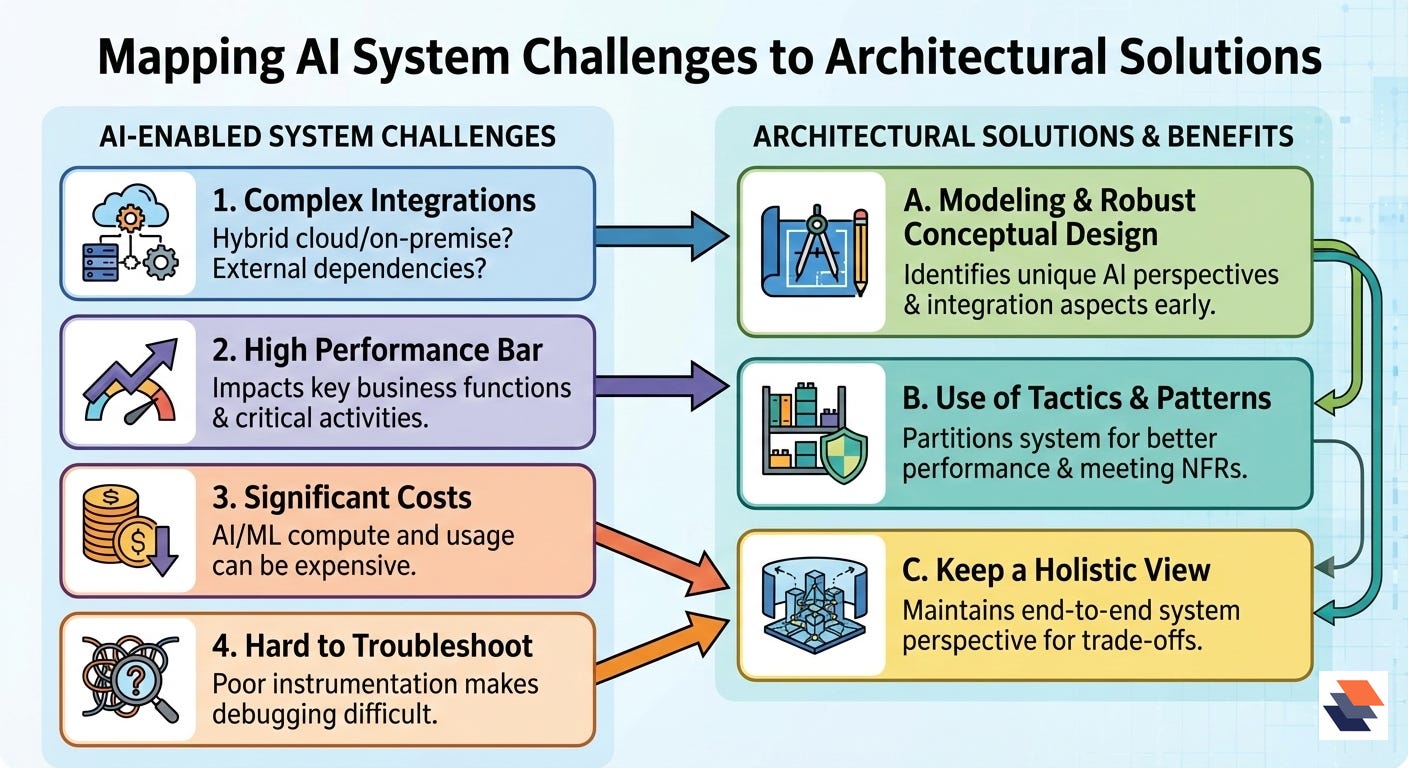

Challenges that impact AI-enabled systems

Complex integrations: for example, will the system be a hybrid cloud or an on-premises deployment? What dependencies exist on external software?

High performance bar: the system is expected to support key business functions or activities that the organization will depend on.

Depending on the technology, using AI/ML can incur significant costs.

If not well architected or instrumented, the system can be difficult to troubleshoot.

What can architecture do to help?

Modeling and robust conceptual design identify the unique perspectives and aspects of the AI technology.

Tactics and patterns partition the system to improve performance and meet non-functional requirements.

Robust architecting helps maintain a holistic view of the end-to-end system.

The Rise of AI Architects

As modern technology progresses, the practice of architecting also evolves. We are now witnessing the rise of the AI architect. The modern AI architect works closer to operations and must help manage the complexity of these systems. Their responsibilities include:

The architect is the principal approver of the acceptance gates for moving from an AI prototype to a production deployment. They ensure the concept has merit, evaluate the prototype’s results and performance, guide the design of the production model, and finally accept the production deployment.

They integrate and synthesize solutions that balance the needs of the data science, data engineering, software development, operations, and business teams.

The architect is the principal owner of the non-functional requirements and interfaces across the AI system.

They must be involved in observability, because they need to understand what decisions the software is making.
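For the observability point above, one common approach, shown here as a hypothetical sketch rather than a prescribed design, is to emit a structured record for every model decision so operators can later ask what the system decided, with which model version, and on what input. The `log_decision` helper, its field names, and the plain list used as a log sink are illustrative assumptions.

```python
import hashlib
import json
import time
import uuid


def log_decision(model_version, features, decision, confidence, sink):
    """Append one model decision to `sink` as a JSON line and return the record.

    The input is canonicalized (sorted keys) and hashed, so the same input always
    yields the same hash and can be correlated across logs without storing raw,
    possibly sensitive, feature values.
    """
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    record = {
        "decision_id": str(uuid.uuid4()),   # unique handle for tracing this decision
        "timestamp": time.time(),           # seconds since the epoch
        "model_version": model_version,     # which model made the call
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "decision": decision,
        "confidence": confidence,
    }
    sink.append(json.dumps(record))  # stand-in for a real log or telemetry pipeline
    return record
```

Hashing instead of logging raw features is a design choice that trades direct inspection for privacy; teams that need full replay would store inputs in a separate, access-controlled store keyed by the same hash.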

From Idea to Production: The Life Cycle

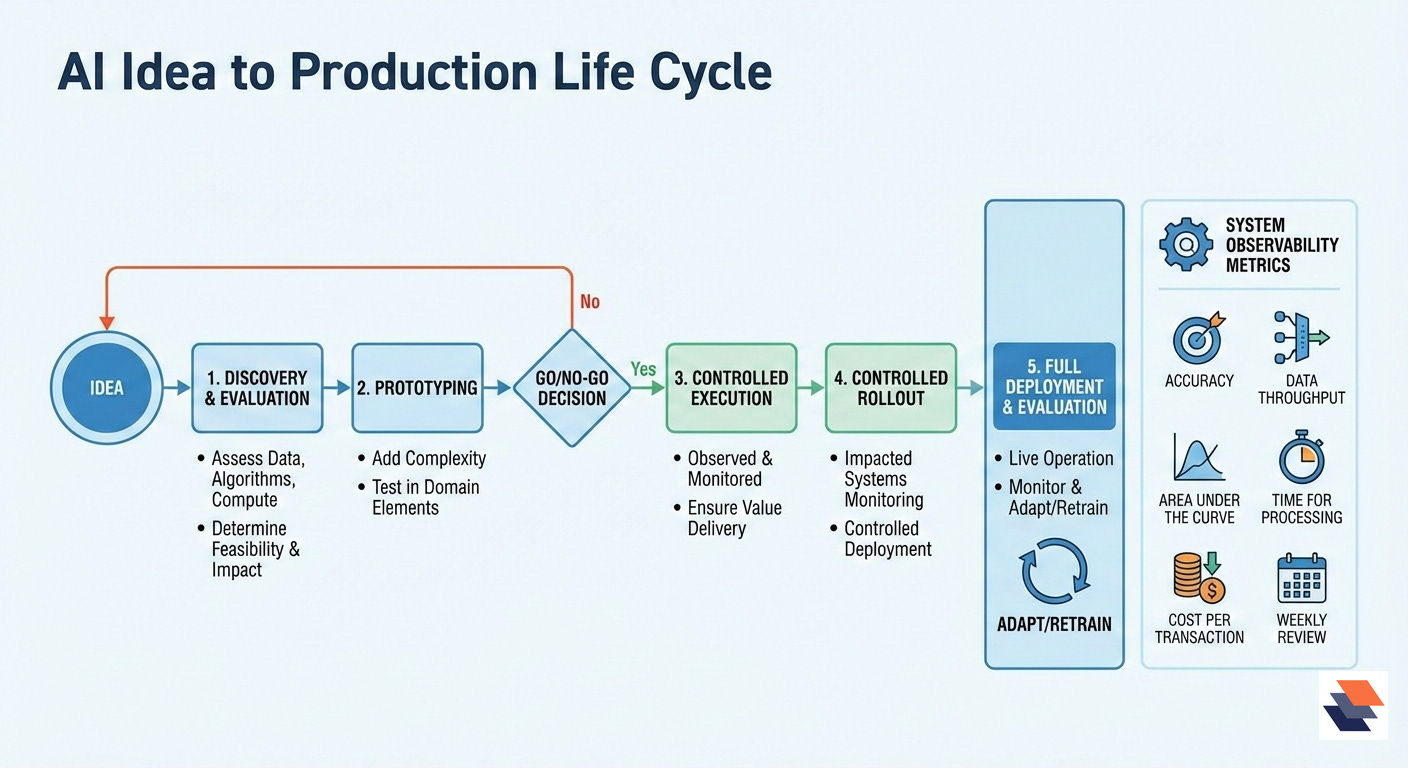

As AI engineering matures, a notional process is emerging for taking an AI idea to production. The initial stage is discovery and evaluation, which seeks to determine whether the data, algorithms, and compute resources exist to build a system that will have impact. The next stage is prototyping, where the software grows more complex as more production-domain elements are added to test how well the new system would work. At the end of the prototyping phase, a key decision is made about whether taking the prototype to a production environment is warranted.

After the prototyping phase, the model goes through a controlled execution in which it is observed and monitored to ensure it is working as expected and delivering value. Once this monitoring phase is complete, the system undergoes a controlled rollout to production, with another layer of monitoring to ensure that the systems it now affects still function as expected. Finally, with these gates passed, a full deployment can proceed, and the system enters a monitoring and evaluation phase to determine whether the model needs to be adapted or retrained.
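The stages above can be expressed as a small state machine in which each transition requires its gate to pass. This Python sketch is an illustration only: the stage names follow the article, but the `advance` helper and the choice to loop back to prototyping when retraining is needed are assumptions, since the article does not say where a retrained model re-enters the process.

```python
from enum import Enum


class Stage(Enum):
    DISCOVERY = "discovery and evaluation"
    PROTOTYPE = "prototyping"
    CONTROLLED_EXECUTION = "controlled execution"
    CONTROLLED_ROLLOUT = "controlled rollout"
    FULL_DEPLOYMENT = "full deployment"
    MONITOR_EVALUATE = "monitor and evaluate"


ORDER = list(Stage)  # Enum iteration follows definition order


def advance(current, gate_passed, needs_retraining=False):
    """Return the next stage, moving forward only when the current gate has passed.

    MONITOR_EVALUATE is the steady state: the system stays there unless the
    evaluation calls for retraining, in which case (by assumption) it loops
    back to PROTOTYPE to pass the gates again.
    """
    if current is Stage.MONITOR_EVALUATE:
        return Stage.PROTOTYPE if needs_retraining else current
    if not gate_passed:
        return current  # hold at this stage until the gate's criteria are met
    return ORDER[ORDER.index(current) + 1]
```

Holding at a stage when its gate fails is only one option; the go/no-go decision at the end of prototyping could just as well retire the idea entirely.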

Once the system is in operation, a new set of observability metrics needs to be captured. Below are some basic ones; the list is not exhaustive:

Accuracy

Data throughput

Area under the curve (AUC)

Processing time

Cost per transaction
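As a rough illustration of how the metrics listed above might be computed over a window of logged, labeled transactions, here is a Python sketch. The `Txn` record, the 0.5 decision threshold, and the per-transaction cost field are assumptions. AUC is computed with the pairwise (Mann-Whitney) formulation, which is quadratic in the window size and only suitable for small windows.

```python
from dataclasses import dataclass


@dataclass
class Txn:
    label: int        # ground-truth outcome, 0 or 1, once it becomes known
    score: float      # model's predicted probability of class 1
    latency_s: float  # end-to-end processing time for this transaction
    cost_usd: float   # inference/infrastructure cost attributed to it (assumed available)


def window_metrics(txns, window_s, threshold=0.5):
    """Compute basic observability metrics for one time window of transactions."""
    if not txns:
        raise ValueError("no transactions in window")
    n = len(txns)
    correct = sum((t.score >= threshold) == (t.label == 1) for t in txns)
    pos = [t.score for t in txns if t.label == 1]
    neg = [t.score for t in txns if t.label == 0]
    # AUC = probability that a random positive outscores a random negative (ties count 0.5).
    pairs = [1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg]
    return {
        "accuracy": correct / n,
        "throughput_per_s": n / window_s,
        "auc": sum(pairs) / len(pairs) if pairs else float("nan"),
        "mean_latency_s": sum(t.latency_s for t in txns) / n,
        "cost_per_txn_usd": sum(t.cost_usd for t in txns) / n,
    }
```

Ground-truth labels often arrive later than predictions, so in practice accuracy and AUC tend to be computed on a lagged window while throughput, latency, and cost can be tracked in near real time.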

How often these metrics should be reviewed depends on how time-sensitive AI performance is to the enterprise. That said, operations teams should review these observability metrics frequently, and other stakeholders should review them weekly or more often, to see how AI model performance is affecting their areas of responsibility.

Specific Areas an AI Architect Can Influence

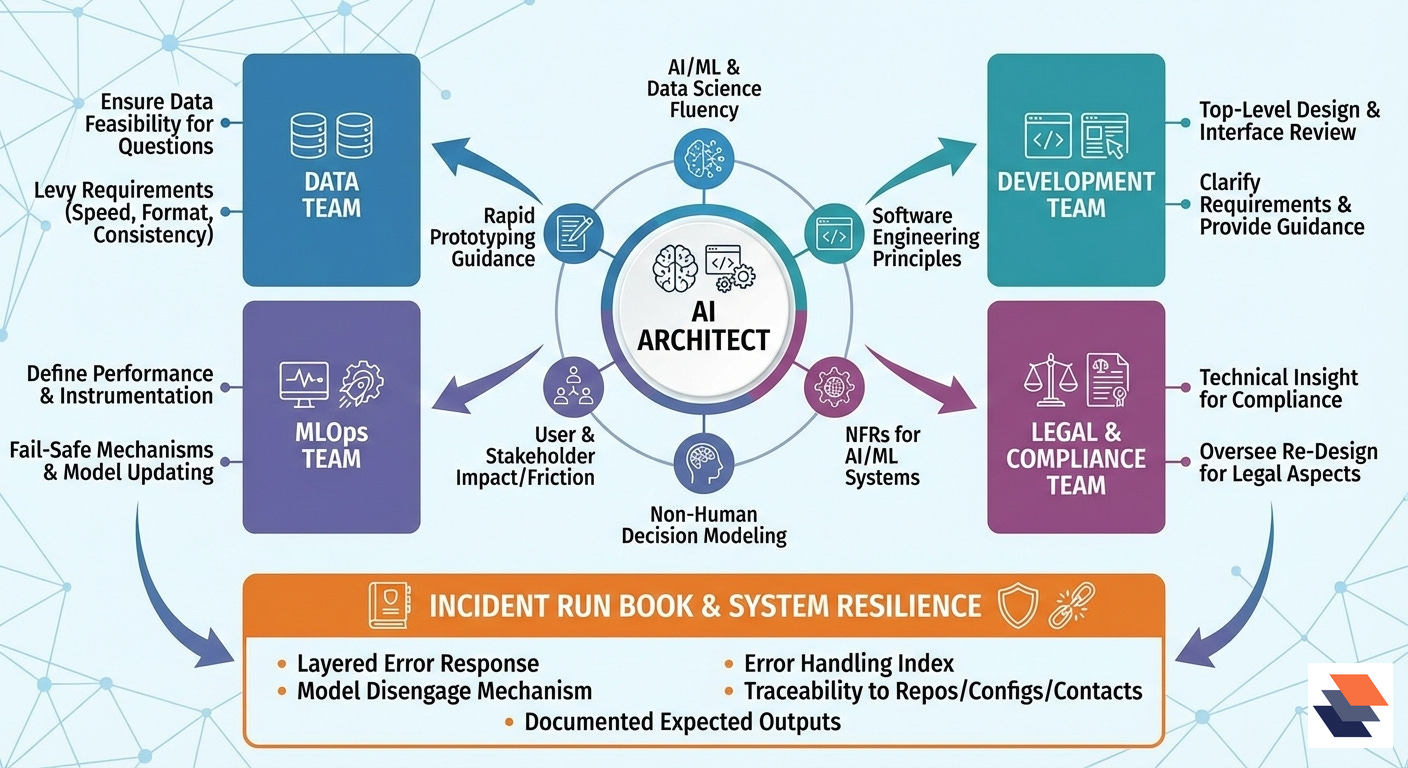

As has been mentioned, the AI architect needs a solid understanding of AI/ML and data science topics and must be able to communicate in their language. This includes understanding the role of non-functional requirements as applied to AI/ML systems. Architects also need to extend their architecture modeling knowledge to systems that involve some degree of non-human decision making. They will need to understand how the system’s users and stakeholders will be affected and aided, and where the potential friction points lie. They will need to stay close to guiding and evaluating rapid prototyping in support of software development. The architect also needs to consider interfaces and how their management will affect the system’s decision making. The use of AI/ML technologies inherently adds complexity: adding a layer of AI to almost any application raises it significantly. An architect who assumes that a simple AI application can be built in a less rigorous manner does so at their own peril.

The AI architect must, by necessity, straddle AI/ML and software engineering, so that they can navigate the alphabet soup of techniques, algorithms, and technologies that exist. It is very easy for an AI architect to be overwhelmed by the various other roles needed to deploy modern AI systems. Many enterprises have several functional teams: Data Team, Development Team, MLOps, and Legal/Compliance. Below are some of the major facets that the architect influences and needs to be involved with:

Data Team

The architect works with the data team to ensure that the product question can indeed be answered by the data that exists or is to be used. The architect is pivotal in levying the requirements for speed, format, and consistency checks that the data must meet.
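One way to make the data team’s speed, format, and consistency requirements checkable is a small data contract validated at ingestion. In this Python sketch, the field names, the 15-minute staleness limit, and the amount range are hypothetical placeholders, not values from the article.

```python
from datetime import datetime, timedelta

# Hypothetical contract levied on an upstream feed; names and limits are illustrative.
CONTRACT = {
    "schema": {"customer_id": str, "amount": float, "event_time": datetime},  # format
    "amount_range": (0.0, 1_000_000.0),        # consistency: plausible values only
    "max_staleness": timedelta(minutes=15),    # speed: how fresh the data must be
}


def check_record(rec, now, contract=CONTRACT):
    """Return a list of contract violations for one record (empty list means it passes)."""
    violations = []
    for name, expected_type in contract["schema"].items():
        if rec.get(name) is None:
            violations.append(f"missing {name}")
        elif not isinstance(rec[name], expected_type):
            violations.append(f"{name}: expected {expected_type.__name__}")
    if violations:
        return violations  # value checks are meaningless if the format is wrong
    low, high = contract["amount_range"]
    if not low <= rec["amount"] <= high:
        violations.append("amount out of range")
    if now - rec["event_time"] > contract["max_staleness"]:
        violations.append("record is stale")
    return violations
```

Returning a list of violations rather than raising on the first one lets the pipeline count and report failure types, which gives the architect evidence about whether the contract is realistic.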

Development Team

The architect works to ensure that the designs being created meet the top-level designs, interfaces, and non-functional requirements. Here the architect acts mainly as a reviewer, and also helps clarify engineering requirements and provides guidance on design questions and challenges.

MLOps Team

In this role, the architect defines how well the production system is required to perform and how it is to be instrumented and monitored for correct execution. The architect also defines the canaries and fail-safe mechanisms that ensure issues with the AI components do not compromise the full production environment, and lays out the mechanisms for updating the model and the system.
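The canary and fail-safe idea can be sketched as a router that sends a small share of traffic to a new model version, falls back to the stable path when the canary errors, and trips permanently once the canary’s recent error rate is too high. This is a simplified, single-process Python illustration under assumed parameters (5% canary share, 200-call window, 2% error budget); production systems usually implement this in the serving or traffic-management layer.

```python
import random
from collections import deque


class CanaryRouter:
    """Send a small share of requests to a canary model, with a fail-safe.

    Canary errors fall back to the stable model for that request. Once the
    last `window` canary calls show an error rate above `max_error_rate`,
    the router trips and routes everything to the stable model.
    """

    def __init__(self, stable, canary, canary_share=0.05, window=200,
                 max_error_rate=0.02, rng=None):
        self.stable = stable
        self.canary = canary
        self.canary_share = canary_share
        self.max_error_rate = max_error_rate
        self.outcomes = deque(maxlen=window)  # 1 = canary error, 0 = success
        self.tripped = False
        self.rng = rng or random.Random()

    def handle(self, request):
        if self.tripped or self.rng.random() >= self.canary_share:
            return self.stable(request)
        try:
            result = self.canary(request)
        except Exception:
            self._record(1)
            return self.stable(request)  # degrade to the known-good path
        self._record(0)
        return result

    def _record(self, outcome):
        self.outcomes.append(outcome)
        window_full = len(self.outcomes) == self.outcomes.maxlen
        if window_full and sum(self.outcomes) / len(self.outcomes) > self.max_error_rate:
            self.tripped = True
```

Waiting for a full window before judging avoids tripping on one unlucky early error; the trade-off is that a badly broken canary still serves up to `window` requests (each with a stable fallback) before it is cut off.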

Legal and Compliance Team

In this capacity, the architect provides the technical insight, design guidance, and evidence that the AI system meets the legal and compliance needs of the enterprise. The architect also oversees redesign and troubleshooting activities to ensure legal and compliance requirements are indeed met.

Incident Run Book

There needs to be a run book, and the architect should be a principal in its development. The run book should take a layered approach to addressing an error in the AI model or its interfaces. Clear mechanisms and architecture must exist to disengage the model from the production system and return the production system to a nominal configuration. The run book should also specify the expected outputs of each stage of the system; that is, it should document what “correct” looks like at each stage, and why, to enable quicker troubleshooting. The system’s error handling should also be readily indexed in the run book so that system, data, and model tracing can occur. Finally, the run book should include clear traceability to software repositories, configuration information, and points of contact.
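Parts of such a run book can be kept machine-readable so that an emitted error code leads straight to the stage, the expected output, the first action, the repository, and the owner. This Python sketch also shows a kill switch that disengages the model and serves a non-AI fallback. Every error code, repository name, flag (`MODEL_ENABLED`), and team name here is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunbookEntry:
    error_code: str       # code the system emits at the failure point
    stage: str            # pipeline stage where the error surfaces
    expected_output: str  # what "correct" looks like at this stage, and why
    first_action: str     # layered response: start with the cheapest, safest step
    repo: str             # where the relevant code and configuration live
    contact: str          # owning team or on-call rotation


# Hypothetical entries; real codes, repos, and owners come from your own system.
RUNBOOK = {e.error_code: e for e in [
    RunbookEntry("ING-001", "ingestion", "rows match upstream schema; null rate under 0.1%",
                 "quarantine the batch and replay from the last good offset",
                 "data-pipelines", "data-oncall"),
    RunbookEntry("MDL-010", "inference", "scores within [0, 1]; p95 latency under 300 ms",
                 "disengage the model (MODEL_ENABLED=false) and serve the fallback",
                 "model-serving", "mlops-oncall"),
]}

ESCALATE = RunbookEntry("UNKNOWN", "unknown", "n/a",
                        "page the on-call architect and capture traces",
                        "n/a", "architecture-oncall")


def triage(error_code):
    """Look up the run book entry for an error code, escalating unknown codes."""
    return RUNBOOK.get(error_code, ESCALATE)


def predict(features, flags, model, fallback):
    """Kill switch: with the model disengaged, the system runs its nominal, non-AI path.

    Defaulting to the fallback when the flag is absent is a fail-safe choice.
    """
    if flags.get("MODEL_ENABLED", False):
        return model(features)
    return fallback(features)
```

Keeping the index in code or configuration, next to the services it describes, makes it easier to review run book changes alongside the code changes that require them.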

Conclusion

These are exciting times to be an AI architect. The field is in its infancy, and there are many excellent and challenging domains where one can make a significant impact. In this new era, one needs to accept that learning and adaptation will be constant. As AI/ML techniques and concepts continue to multiply, their combinations and applications keep growing. That said, a solid foundational knowledge of AI/ML concepts will help mitigate short-term knowledge gaps. Are you ready to become an AI architect?

🔍In case you missed it…

🛠️Tool of the Week

OpenTelemetry Collector — Vendor-neutral telemetry pipeline for traces, metrics, logs, and profiles

The OpenTelemetry Collector is an open-source service that sits between your applications and your observability backends, receiving telemetry, processing it (filtering, sampling, enriching), and exporting it in a controlled, consistent way. It’s designed to reduce the sprawl of per-vendor agents while keeping the data path configurable and auditable.

Highlights:

Pluggable pipelines you can reason about: Collector configs are explicitly built from receivers, processors, exporters, and connectors, enabling transparent ingestion → transformation → delivery flows instead of opaque agents.

Unified multi-signal collection: One codebase can handle traces, metrics, and logs (and supports profiles via components), allowing teams to consolidate telemetry paths and apply consistent policy controls.

Production-grade release cadence and governance: The Collector shipped v1.49.0/v0.143.0 on 05 Jan 2026, with published breaking changes and end-user changelogs—useful when you operate it as shared infrastructure.

📎Tech Briefs

Scaling PostgreSQL to power 800 million ChatGPT users: Details how OpenAI scaled PostgreSQL for ChatGPT/API workloads—using a single primary with large-scale read replication and hard-earned operational lessons from overload-driven SEVs.

Route leak incident on January 22, 2026: Cloudflare’s post-incident report explains how an automation/policy misconfiguration leaked IPv6 routes for ~25 minutes (triggering congestion/loss), and lists concrete guardrails they’re adding in automation and validation.

What’s new with Google Cloud— January 24, 2026: Google Cloud’s weekly roundup compiles recent product updates, announcements, and engineering resources in one place (a useful “diff feed” for platform teams tracking change).

Power agentic workflows in your terminal with GitHub Copilot CLI: GitHub introduces Copilot CLI, positioning the terminal as a first-class surface for agentic developer workflows rather than a thin wrapper around the IDE.

Headlamp in 2025: Project Highlights: The Headlamp maintainers recap what shipped across 2025 and what they’re prioritizing next—useful signal for platform orgs standardizing cluster UX and operational visibility.

That’s all for today. Thank you for reading this issue of Deep Engineering. We have some really exciting things planned for Deep Engineering members in 2026, and we can’t wait to tell you all about them very soon.

We’ll be back next week with more expert-led content.

Stay awesome,

Divya Anne Selvaraj

Editor-in-Chief, Deep Engineering

If your company is interested in reaching an audience of developers, software engineers, and tech decision makers, you may want to advertise with us.