Deep Engineering #46: Jim Ledin on Modern Computer Architecture and the AI Infrastructure Layer

Memory bandwidth, GPU trade-offs, and the infrastructure decisions that determine whether AI systems hold up in production

✍️ From the editor’s desk,

Welcome to the 46th issue of Deep Engineering!

This week, InfoQ analyzed what it actually took for Cloudflare to run large language models efficiently on their global network. The team built a custom inference engine called Infire from scratch in Rust, split model processing into two separate hardware stages because a single machine could not handle both efficiently, and compressed model weights by 15 to 22 percent to reduce what GPUs need to load and move during inference. The reason they had to do all of this is the same one that matters to every engineering team building AI systems: the hardware layer is not an abstraction you can ignore. It is the constraint that every other architectural decision is made around.

This pattern, where standard approaches to running AI workloads break down under real production constraints and the fix requires going back to the hardware layer, is one most engineering teams will eventually encounter. The engineers who avoid it are the ones who understood the hardware constraints before they started building, not after they hit them. Cloudflare’s engineering blog post goes into the technical detail for teams who want to dig further.

This week we are featuring Jim Ledin, CEO of Ledin Engineering and author of Modern Computer Architecture and Organization, now in its third edition published by Packt. Ledin has over thirty years of experience working on embedded systems, safety-critical hardware, and cybersecurity. In this issue he breaks down what engineers building AI systems get wrong about the hardware layer and why it costs them.

Let’s get started.

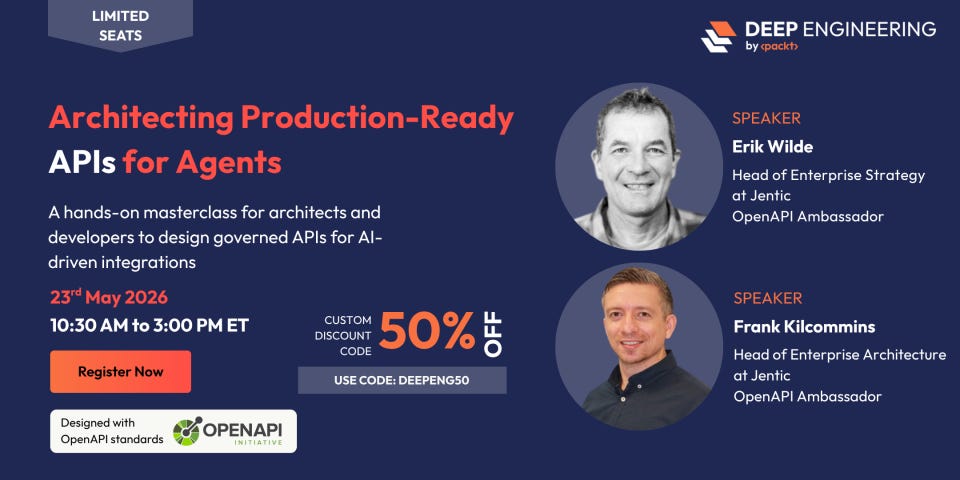

Expert Insights

Hardware Is Not Someone Else’s Problem

For most software engineers working in the cloud, hardware is an abstraction managed by someone else. You provision compute, write code, deploy, and pay the bill. What happens between the instruction and the silicon is not your concern. That assumption has always had a cost. In an AI-accelerated world, that cost is becoming visible in ways that are harder to ignore.

Jim Ledin, CEO of Ledin Engineering, has been working at the boundary where software meets silicon for over thirty years. His entry point into computer architecture was not a formal computer science curriculum. It was a Commodore 64, a joystick, and a drawing program so slow you could watch it move one pixel at a time.

“That episode really cemented for me how important it is to understand what is going on in the hardware of a system, and not just write what you want to do in your favourite language,” Ledin reflects.

He rewrote the inner loops of that drawing program in 6502 assembly, poking opcodes directly into memory, and the line shot across the screen faster than he could see. The lesson stayed with him across thirty years of embedded systems work, electric vehicle software, and cybersecurity testing on safety-critical hardware. Understanding what the hardware is actually doing is not an optimization exercise. It is the difference between software that works and software that works reliably under real constraints.

That distinction matters more now than it ever has, because the hardware layer is where most AI system performance problems actually live, and most of the engineers building those systems have never had to care about it before.

Where the GPU consensus breaks down

The idea that GPUs are the right architecture for AI workloads has become so widely accepted that most teams treat it as settled. Ledin’s view is more specific, and the specificity matters. For local and personal use, such as running models on a consumer GPU like an Nvidia RTX 4090, GPUs are the right choice. For large-scale deployments running the largest models, the picture is different.

The distinction comes down to what GPUs were actually designed to do. The “G” in GPU stands for graphics, and consumer GPUs still carry silicon dedicated to real-time video generation and gaming workloads. TPUs, by contrast, are built entirely around the tensor operations that dominate AI model processing. At least 80% of the execution time in a transformer-based model is matrix multiplications, and TPUs concentrate every transistor on exactly that work.

The more pressing constraint, though, is memory bandwidth. “AI workloads are becoming increasingly memory bandwidth limited. That means it is taking more time to bring data into the GPU or TPU memory than it is taking for the computation itself to complete,” Ledin explains.
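A rough way to see what "memory bandwidth limited" means is to compare how long a kernel would take if only compute mattered against how long it would take if only data movement mattered. The sketch below applies that comparison to a single matrix-vector product. The hardware figures (`PEAK_FLOPS`, `BANDWIDTH`) and the function names are hypothetical, chosen for illustration rather than taken from any real device or from Ledin's interview.

```python
def bound_by(flops: float, bytes_moved: float, peak_flops: float, bandwidth: float) -> str:
    """Return which resource limits a kernel under a simple two-term model."""
    compute_time = flops / peak_flops      # seconds if compute were the only limit
    memory_time = bytes_moved / bandwidth  # seconds if data movement were the only limit
    return "memory" if memory_time > compute_time else "compute"


def matvec_cost(rows: int, cols: int, bytes_per_weight: int = 2):
    """FLOPs and weight bytes for y = W @ x with a rows x cols matrix."""
    flops = 2 * rows * cols                       # one multiply and one add per weight
    bytes_moved = rows * cols * bytes_per_weight  # every weight is read once
    return flops, bytes_moved


# Hypothetical accelerator: 100 TFLOP/s peak compute, 1 TB/s memory bandwidth.
PEAK_FLOPS = 100e12
BANDWIDTH = 1e12

flops, bytes_moved = matvec_cost(8192, 8192)
limit = bound_by(flops, bytes_moved, PEAK_FLOPS, BANDWIDTH)
print(limit)  # prints "memory"
```

With these assumed numbers the weights take roughly a hundred times longer to load than to multiply, which is the situation Ledin describes: the arithmetic units finish early and wait.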

This is the reason high-end AI systems use high bandwidth memory (HBM): stacked RAM modules with far higher data rates than anything available on a consumer GPU. “It is also,” Ledin notes, “part of why DDR5 is becoming harder to find. Production capacity for memory is increasingly going into HBM modules for AI infrastructure rather than into consumer components.”

For engineering teams choosing hardware for AI deployments, the implication is concrete: the GPU consensus is correct for a specific part of the problem space, and incomplete for the rest of it.

Data movement is the real cost

The performance conversation in AI engineering tends to focus on compute: cores, clock speed, parallelization. Ledin redirects it toward something that gets less attention and causes more problems.

“Data movement can often be more expensive than the actual computation steps. The latency of moving large data structures across different levels of the memory hierarchy can dominate and leave a lot of compute bandwidth idle,” he emphasizes.

This is not a new insight in systems engineering, but it is one that most application developers have never had to internalize because the abstractions they work with hide it. In a modern PC, reading a single byte from DRAM causes 64 bytes to be transferred into the CPU cache. If the code then bounces to other memory locations, causes those to be loaded into cache, and pushes that first block out, the next access to that original data requires fetching it again from DRAM. The latency compounds across every cache miss, and in AI workloads operating on large data structures, those misses accumulate fast.
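The eviction pattern described above can be reproduced with a toy model. The sketch below simulates a small, fully associative cache with least-recently-used eviction and 64-byte lines, then counts how many line fetches two different visiting orders of the same buffer cause. The cache size of 8 lines and the name `count_misses` are assumptions made for illustration; real CPU caches are larger and organized differently.

```python
from collections import OrderedDict

LINE_SIZE = 64  # bytes per cache line, as in the text


def count_misses(addresses, cache_lines: int) -> int:
    """Count line fetches for a toy fully associative LRU cache."""
    cache = OrderedDict()  # keys are line numbers, ordered oldest to newest
    misses = 0
    for addr in addresses:
        line = addr // LINE_SIZE
        if line in cache:
            cache.move_to_end(line)  # mark as most recently used
        else:
            misses += 1
            cache[line] = True
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict the least recently used line
    return misses


N = 4096  # bytes in the buffer
sequential = list(range(N))
# Same bytes, visited one cache line apart: 0, 64, 128, ..., then 1, 65, ...
strided = [base + offset for offset in range(LINE_SIZE) for base in range(0, N, LINE_SIZE)]

seq_misses = count_misses(sequential, cache_lines=8)
strided_misses = count_misses(strided, cache_lines=8)
print(seq_misses, strided_misses)  # prints "64 4096"
```

Both orders touch exactly the same 4,096 bytes. The sequential walk fetches each line once; the strided walk evicts every line before returning to it, so every single access goes back to memory.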

The practical recommendation follows directly. Iterating across large data structures multiple times in an algorithm should be avoided wherever possible. Working through memory linearly, in a way that keeps recently accessed data in cache rather than evicting it, is the single most impactful optimization available to most AI system code. It does not require a new framework or a different hardware platform. It requires understanding what the hardware is doing with the data you give it.
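As a minimal illustration of avoiding repeated passes, the sketch below computes three statistics over a list in two ways: once by walking the data three times, and once by walking it a single time and updating all three results per element. The function names are hypothetical. One caveat: in CPython the built-in `min`, `max`, and `sum` run in C and may well be faster on small inputs, so this shows the access pattern rather than a guaranteed speedup; the payoff appears in compiled code operating on data too large to stay in cache.

```python
def stats_three_passes(values):
    """Walks the data three times: once each for min, max, and sum."""
    return min(values), max(values), sum(values)


def stats_one_pass(values):
    """Walks the data once, updating all three results per element."""
    it = iter(values)
    first = next(it)  # raises StopIteration on empty input
    lo = hi = total = first
    for v in it:
        if v < lo:
            lo = v
        elif v > hi:
            hi = v
        total += v
    return lo, hi, total


data = [7, 3, 9, 1, 4]
print(stats_one_pass(data))  # prints "(1, 9, 24)"
```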

In cloud environments, this understanding has a direct financial translation. “You are paying for the usage of the system whether the CPU is actually crunching instructions or sitting idle waiting for a data item to come in from memory,” Ledin warns. Inefficient memory access patterns do not just slow down a system. They inflate the bill for it.
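The arithmetic behind that warning is simple enough to write down. The sketch below estimates how much of a bill is spent while the processor waits on memory. The hourly rate, the hours, and the 40% stall fraction are hypothetical inputs; in practice the stall fraction would have to come from profiling a specific workload.

```python
def cost_of_stalls(hourly_rate: float, hours: float, stall_fraction: float) -> float:
    """Portion of the bill spent while the processor waits on memory."""
    if not 0.0 <= stall_fraction <= 1.0:
        raise ValueError("stall_fraction must be between 0 and 1")
    return hourly_rate * hours * stall_fraction


# Hypothetical: a $4.00/hour instance, 720 hours in a month, 40% of time stalled.
wasted = cost_of_stalls(4.00, 720, 0.40)
print(f"${wasted:.2f}")
```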

When abstraction becomes the problem

Abstractions are one of the most effective tools available to software teams. They accelerate development, limit mistakes, and allow large teams to work on complex systems without every engineer needing to understand every layer. Ledin does not dispute any of this. His concern is more specific: abstractions that obscure hardware costs, in performance-critical applications, are not just unhelpful. They actively create risk.

“Where it becomes dangerous is when abstraction obscures what is happening with the data layout in memory and the execution patterns, basically how the processor is interacting with data as the algorithm proceeds,” he cautions.

The failure mode is not that abstractions break. It is that they make costs invisible until those costs produce an incident. An engineer works within an abstraction layer, the code looks correct at that level, and the performance problem lives underneath it in a layer the abstraction was designed to hide. By the time the problem surfaces in production, the context needed to diagnose it is buried.

Ledin’s recommendation is a two-layer design. Use the most expressive code at the edges of the system, where the abstractions are doing the most valuable work. Use performance-aware code in the core, where the hardware interaction is most consequential. The boundary between those layers is not fixed, and finding it requires benchmarking rather than intuition. But knowing the boundary needs to exist is the starting point. Teams that treat the expressive outer layer as the whole system tend to discover, under load, that the core was never designed for the hardware it runs on.

The CPU versus GPU distinction, for engineers who have never had to care

Most senior software engineers working today have built careers without ever needing to think about the difference between a CPU and a GPU. That is changing, and Ledin’s framing of the distinction is the most useful one available for engineers coming to it for the first time.

A CPU is optimized for low-latency execution of complex branching code. It is built to handle conditional logic, to predict branches and recover when predictions are wrong, and to minimize the latency cost of that work. A GPU is optimized for high-throughput execution of linear code across massively parallel workloads, and it works best when it is running the same instruction across thousands of data streams simultaneously with as little branching as possible.

The implication for algorithm design is practical. “The GPU only really becomes attractive when you have enough work for it to do that it can be parallelised, and enough that it will amortise the costs associated with moving data onto the GPU, launching the kernels to execute the code, and doing the management work to transfer data to and from the GPU,” he points out.

That last point is the one most teams miss. A GPU is not a general purpose computer. It cannot run a program on its own. It needs to be started and managed from a CPU, and the overhead of moving data onto the GPU, scheduling the kernels, and moving results back is real. If the workload is not large enough and parallel enough to amortize that overhead, the CPU implementation wins, not because GPUs are slow, but because the cost of using them correctly exceeds the benefit for that specific workload.
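The amortization argument can be sketched as a break-even calculation. The model below treats the GPU path as a fixed launch cost plus a per-item transfer and compute cost, and the CPU path as a per-item cost only. All timings are hypothetical integers in nanoseconds, and the linear cost model is itself an assumption; real transfers and kernels do not scale this cleanly.

```python
def gpu_time(n, transfer_per_item, launch_overhead, gpu_per_item):
    """Fixed launch cost plus per-item transfer and compute, in nanoseconds."""
    return launch_overhead + n * (transfer_per_item + gpu_per_item)


def cpu_time(n, cpu_per_item):
    """Per-item compute only, in nanoseconds."""
    return n * cpu_per_item


def break_even_items(cpu_per_item, transfer_per_item, launch_overhead, gpu_per_item):
    """Smallest item count at which the GPU path is strictly faster, or None."""
    saving_per_item = cpu_per_item - (transfer_per_item + gpu_per_item)
    if saving_per_item <= 0:
        return None  # transfer and compute per item already cost more than the CPU
    return launch_overhead // saving_per_item + 1


# Hypothetical costs: CPU 10 ns/item, GPU 1 ns/item, transfer 2 ns/item,
# and 20,000 ns of fixed overhead to launch and manage the kernel.
n = break_even_items(cpu_per_item=10, transfer_per_item=2,
                     launch_overhead=20_000, gpu_per_item=1)
print(n)  # prints "2858"
```

Below that item count the CPU wins under this model even though the GPU is ten times faster per item, which is the point of the paragraph above: the overhead has to be paid back before the throughput advantage counts.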

Knowing where that line sits, for a specific algorithm running on specific hardware, is the kind of judgment that requires understanding what the hardware is actually doing. It cannot be read off from a generic benchmark or inferred from a framework’s documentation. It comes from the same place Ledin’s understanding came from: going one level deeper than the abstraction, and learning what happens when the instruction meets the silicon.

In case you missed it

Here’s the full Q&A with the interview video featuring Jim Ledin.

Computer Architecture in an AI-accelerated World with Jim Ledin

Jim Ledin has been thinking about what happens between the instruction and the silicon for over thirty years.

If the hardware layer argument resonates, the article below by Lee Peterson, VP of Secure WAN Product Management at Cisco, covers the same constraint from the networking and distributed compute angle.

Agentic AI Is Redefining Edge Infrastructure

Artificial intelligence is entering a new phase with agentic AI, where autonomous systems perceive, decide, act, and learn without constant human oversight, operating independently across distributed environments while collaborating with other agents in real time.

🛠️ Tool of the Week

vLLM - high-throughput, memory-efficient inference and serving engine for large language models

Cloudflare referenced it as the baseline they benchmarked their custom Infire engine against when building hardware-optimized inference at scale.

Highlights:

PagedAttention eliminates the memory waste that causes most GPU out-of-memory failures in production inference.

Continuous batching processes requests in a dynamic stream rather than static batches, keeping GPUs saturated under real load.

Disaggregated prefill/decode runs compute-bound and memory-bound stages on separate hardware for better throughput.

Supports tensor parallelism across multi-GPU deployments, along with FP8 and NVFP4 quantization.

📎 Tech Briefs

Inference gives AI chip startups a second chance - Disaggregated inference, splitting prefill and decode across purpose-built silicon, is making GPU-only inference architectures look like the wrong default for large-scale production deployments.

OpenAI releases MRC for AI training networks - OpenAI’s MRC shows frontier training now depends on failure-tolerant network design, making the interconnect layer a first-class engineering constraint rather than an infrastructure afterthought.

Anthropic opens Claude Security public beta - Claude Security moves vulnerability scanning closer to code review, triage, and patch creation, shifting security work earlier into the engineering workflow rather than treating it as a downstream audit step.

Google opens Workspace MCP server preview - Google is turning enterprise agents into a governed API and access-control problem, with MCP making the boundary between agent capability and enterprise data policy the next infrastructure challenge for platform teams.

vLLM v0.20.1 ships with DeepSeek V4 stabilization and FP4 improvements - The patch release stabilizes DeepSeek V4 serving and improves FP32-to-FP4 conversion speed.

That’s all for today. Thank you for reading this issue of Deep Engineering.

We’ll be back next week with more expert-led content.

Stay awesome,

Saqib Jan

Editor-in-Chief, Deep Engineering
